In the digital backbone of every business, whether a small office computer room or a mid-sized IT hub, computer room cooling units are the unsung guardians of operational continuity. Servers, storage devices, and networking equipment generate constant heat; a single rack can put out 5–20kW of heat, and even a slight deviation from optimal temperatures (18–24°C, per ASHRAE guidelines) can lead to hardware throttling, data corruption, or catastrophic downtime.

With the average cost of server downtime reaching $100,000 per hour (Gartner), it’s critical to get your cooling setup right. Yet, many organizations unknowingly make costly mistakes with their computer room cooling units—from poor selection to neglectful maintenance—that undermine efficiency, reliability, and longevity. In this post, we’ll unpack the most common errors and how to fix them, ensuring your cooling units deliver maximum value.

1. Common Mistakes in Selecting Computer Room Cooling Units

· Ignoring Heat Load Calculations

The single biggest mistake businesses make is skipping heat load calculations when choosing computer room cooling units. Heat load refers to the total amount of heat generated by all IT equipment, plus environmental factors like sunlight and insulation. Without this calculation, you’re essentially guessing—leading to either under-cooling (insufficient capacity to handle heat) or over-cooling (wasting energy on an oversized unit).

Under-cooling is a disaster waiting to happen. A mid-sized marketing firm in Chicago learned this the hard way: they installed a 30kW cooling unit for a computer room with 8 racks (total heat load of 45kW). Within months, hot spots emerged (reaching 29°C), causing servers to throttle during peak hours and leading to two 45-minute outages. Over-cooling is equally problematic: an accounting firm in Boston opted for a 60kW unit for a 35kW heat load. The unit short-cycled (frequently turning on and off) to avoid over-cooling the space, wearing down components and increasing energy bills by 38% compared to a properly sized system.

The fix is simple: conduct a thorough heat load audit. Sum the rated wattage of all equipment (found on labels or specs), add 10–20% for environmental factors, and choose a cooling unit with a capacity that matches this total (plus a 10% buffer for future growth). Tools like ASHRAE’s heat load calculators or consulting with a cooling specialist can streamline this process.
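The audit arithmetic above can be sketched in a few lines of Python. This is a minimal illustration rather than a substitute for a specialist's calculation: the function name `required_cooling_kw`, its parameters, and the choice to apply the growth buffer on top of the environmental allowance are all assumptions made for the example.

```python
def required_cooling_kw(equipment_watts, env_factor=0.15, growth_buffer=0.10):
    """Return (heat_load_kw, required_capacity_kw) for a computer room.

    equipment_watts: rated wattage of each device (from labels or specs).
    env_factor: allowance for sunlight, insulation, etc. (10-20% in the text).
    growth_buffer: headroom for future growth (10% in the text).
    """
    if any(w < 0 for w in equipment_watts):
        raise ValueError("wattages must be non-negative")
    it_load_kw = sum(equipment_watts) / 1000.0
    heat_load_kw = it_load_kw * (1 + env_factor)
    # Assumption: the buffer is applied to the total heat load,
    # not to the IT load alone.
    required_kw = heat_load_kw * (1 + growth_buffer)
    return heat_load_kw, required_kw


# Hypothetical room: 8 racks rated at 5,000 W each, default 15% allowance.
heat_load, required = required_cooling_kw([5000] * 8)
print(f"Heat load: {heat_load:.1f} kW, target capacity: {required:.1f} kW")
```

Rated wattage tends to overstate real draw, so treat the result as a starting point and refine it with measured power data where you have it.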

· Choosing the Wrong Type of Cooling Unit

Not all computer room cooling units are designed for the same environments. Choosing a unit ill-suited to your space, IT density, or climate leads to inefficiency and premature failure. The two most common types—air-cooled precision units and liquid cooling systems—serve distinct needs, yet many businesses mix them up.

Air-cooled precision units (the most common choice) are ideal for low-to-medium density racks (≤15kW) and small-to-medium computer rooms (50–500 sq ft). They’re cost-effective, easy to install, and maintain tight temperature control (±1°C). However, a cloud startup in Austin made the mistake of using air-cooled units for 4 high-density racks (20kW each) powering AI workloads. The units struggled to handle the concentrated heat, leading to constant hot spots and a 40% increase in energy use. They should have opted for liquid cooling systems, which carry heat away far more effectively than air (water’s specific heat is roughly 4x that of air by mass) and are designed for ultra-dense setups (above 15kW per rack).

Conversely, liquid cooling is overkill for small, low-density computer rooms. A retail chain’s 3-rack server closet (12kW total load) installed a liquid cooling system, spending 3x more upfront than a compact air-cooled unit. The system required complex maintenance and wasted energy, as it was engineered for far higher heat loads. The key takeaway: match the cooling unit type to your IT density and space—air-cooled for low-to-medium density, liquid for high-density, and portable units for temporary or edge setups.
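As a rough sketch, the matching rule can be written as a small lookup. The function name `suggest_unit_type` is hypothetical. Sizing for the densest rack and treating exactly 15kW as air-cooled are assumptions made here, since the article's two ranges meet at that value.

```python
def suggest_unit_type(rack_kw, temporary_or_edge=False, air_cooled_limit_kw=15.0):
    """Suggest a cooling unit type from per-rack heat loads in kW.

    Thresholds follow the rule of thumb in the text. Treating exactly
    15 kW as air-cooled is an assumption for this sketch.
    """
    if not rack_kw:
        raise ValueError("at least one rack load is required")
    if temporary_or_edge:
        return "portable"
    # Assumption: judge by the densest rack, since a single hot rack
    # is enough to create a hot spot.
    if max(rack_kw) <= air_cooled_limit_kw:
        return "air-cooled"
    return "liquid"


print(suggest_unit_type([20, 20, 20, 20]))  # four 20 kW AI racks
print(suggest_unit_type([4, 4, 4]))         # 12 kW closet, assumed split evenly
```

A real selection would also weigh room size, climate, and budget; the threshold is exposed as a parameter so it can be adjusted.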

2. Installation-Related Mistakes That Undermine Performance

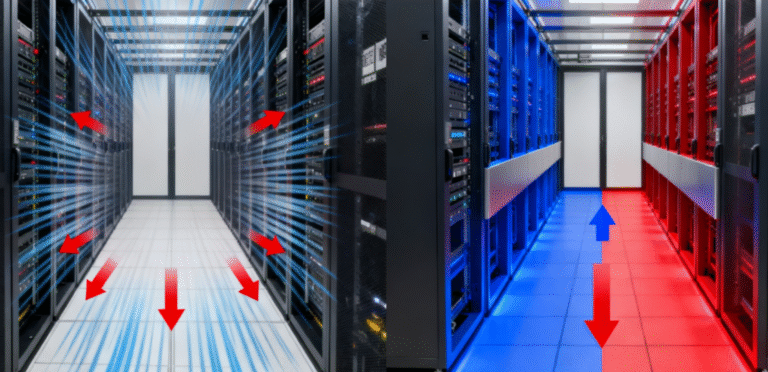

· Incorrect Placement of Cooling Units

Even the best computer room cooling units fail if placed incorrectly. Common placement errors include positioning units too close to walls or racks (blocking airflow), placing them in corners (creating stagnant hot zones), or orienting them so cool air doesn’t reach server intakes.

A logistics company in Atlanta installed two 50kW precision cooling units against the back wall of their computer room, with racks positioned directly in front. The units’ air intakes were blocked by the racks, reducing airflow by 30% and creating hot spots at the front of the room. After repositioning the units to the side walls (with clear air paths to the racks), cooling efficiency improved by 25%, and hot spots disappeared. Another common error is placing units near heat sources like windows (exposed to sunlight) or HVAC vents—this forces the unit to work harder to compensate for extra heat, increasing energy use and wear.

The rule of thumb: ensure cooling units have at least 2–3 feet of clearance on all sides, position them to direct cool air toward server intakes (aligning with cold aisles), and avoid heat sources. For rooms with raised floors, install units to leverage underfloor air distribution, ensuring cool air flows upward to rack intakes.

· Cutting Corners on Installation Quality

Skimping on professional installation to save money is a costly mistake. Improper installation can lead to refrigerant leaks, poor airflow, electrical issues, or even water damage (for water-cooled units). A financial services firm in Miami hired a general HVAC contractor (not a specialist in computer room cooling) to install their 40kW cooling unit. The contractor incorrectly sized the refrigerant lines, leading to a slow leak that went undetected for months. By the time the issue was found, the unit’s cooling capacity had dropped by 20%, and the compressor was damaged, costing $15,000 in repairs and downtime.

Professional cooling specialists understand the unique requirements of computer room systems: proper refrigerant charging, precise airflow calibration, and compliance with electrical and safety standards. They also test the system post-installation to ensure it meets temperature and humidity targets. While professional installation may cost 10–15% more upfront, it avoids costly repairs and ensures your unit operates at peak efficiency from day one.

3. Maintenance Mistakes That Shorten Lifespan

· Neglecting Regular Filter Cleaning/Replacement

Clogged air filters are the #1 cause of reduced efficiency in computer room cooling units. Filters trap dust, pollen, and debris, but over time, they become blocked—restricting airflow, forcing the unit to work harder, and increasing energy use. A tech startup in Seattle ignored filter maintenance for 6 months; by the time they checked, filters were 80% clogged, reducing airflow by 35% and causing the unit to run 24/7 (instead of cycling on as needed). This increased their cooling energy bill by 42% and shortened the fan motor’s lifespan by 3 years.

The fix is simple: clean or replace filters every 1–3 months (more frequently in dusty environments). Most computer room cooling units have easily accessible filters—set calendar reminders to check them, or invest in smart units that send alerts when filters are dirty. For high-dust environments (e.g., industrial areas), use high-efficiency filters (MERV 11 or higher) to trap more particles and reduce maintenance frequency.
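If calendar reminders are the chosen route, the schedule is easy to compute. The sketch below assumes a 30-day interval for dusty rooms and a 90-day interval otherwise, both picked from within the 1–3 month range above. The names `CHECK_INTERVAL_DAYS`, `next_filter_check`, and `is_overdue` are illustrative only.

```python
from datetime import date, timedelta

# Assumed intervals, chosen from within the 1-3 month range in the text.
CHECK_INTERVAL_DAYS = {"dusty": 30, "normal": 90}


def next_filter_check(last_checked, environment="normal"):
    """Return the date on which the filter should next be checked."""
    try:
        interval = CHECK_INTERVAL_DAYS[environment]
    except KeyError:
        raise ValueError(f"unknown environment: {environment!r}") from None
    return last_checked + timedelta(days=interval)


def is_overdue(last_checked, today, environment="normal"):
    """True if the next check date has already passed."""
    return today > next_filter_check(last_checked, environment)


print(next_filter_check(date(2024, 1, 1), "dusty"))
# Six months without a check, as in the Seattle example:
print(is_overdue(date(2024, 1, 1), date(2024, 7, 1)))
```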

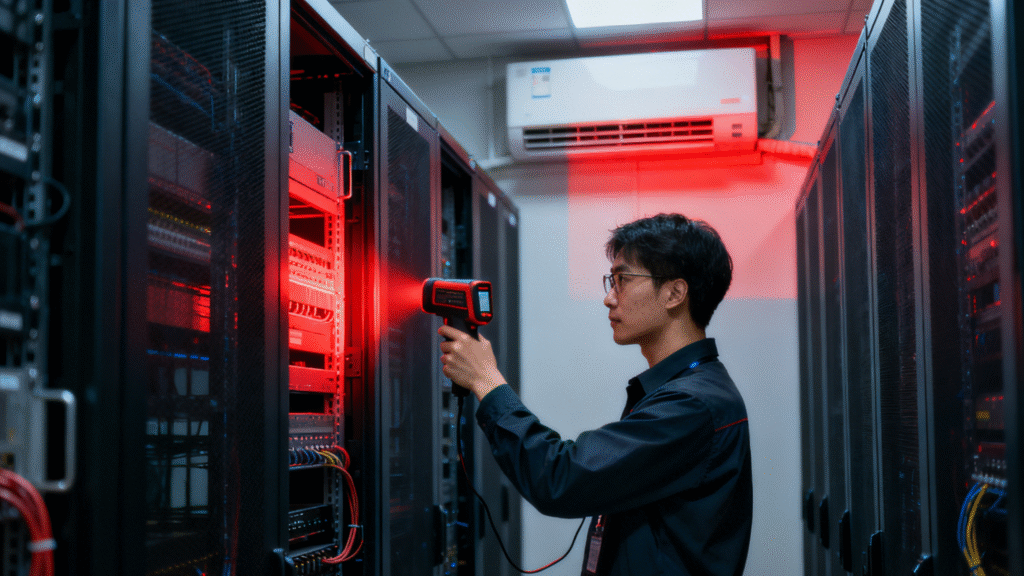

· Skipping Routine Inspections and Calibration

Many businesses treat computer room cooling units as “set-it-and-forget-it” devices, skipping routine inspections until something breaks. This is a mistake: small issues (like a failing sensor or low refrigerant) can escalate into major failures if left unaddressed. A hospital’s computer room in Ohio went 18 months without inspecting their cooling units. When an audit was finally carried out, technicians discovered a faulty temperature sensor that was reporting 22°C when the actual temperature was 26°C. Because the controller believed the room was on target, the unit wasn’t cooling enough, putting patient data servers at risk of overheating.

Routine inspections (quarterly for most units) should include: checking refrigerant levels, testing fans and compressors, calibrating temperature/humidity sensors, inspecting electrical connections, and cleaning coils. Annual professional servicing is also recommended to deep-clean components and identify potential issues. These inspections cost $200–$500 per unit but can prevent $10,000+ in repairs and downtime.
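The sensor calibration step comes down to comparing the unit's reading with a trusted reference probe. Here is a minimal sketch, assuming a 1°C tolerance. That threshold is an assumption for illustration, and the function names are hypothetical; use the tolerance from your unit's documentation.

```python
def sensor_drift_c(reported_c, reference_c):
    """Signed error of the unit's sensor against a reference probe, in °C."""
    return reported_c - reference_c


def needs_calibration(reported_c, reference_c, tolerance_c=1.0):
    """True when the absolute sensor error exceeds the tolerance.

    tolerance_c is an assumed threshold, not a manufacturer figure.
    """
    return abs(sensor_drift_c(reported_c, reference_c)) > tolerance_c


# The hospital case: the unit reported 22 °C while the room was at 26 °C.
print(sensor_drift_c(22.0, 26.0))     # -4.0
print(needs_calibration(22.0, 26.0))  # True
```

A negative drift means the sensor reads low, which is the dangerous direction: the unit under-cools while reporting that all is well.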

4. Operation Mistakes That Waste Energy

· Setting Temperatures Too Low (or Too High)

There’s a common misconception that “colder is better” for server rooms, but setting temperatures below 18°C wastes energy and offers no additional protection. A software company in Portland set their computer room cooling units to 16°C, believing it would extend server life. In reality, this increased energy use by 28% (since the unit had to work harder to maintain the lower temperature) without any measurable benefit—ASHRAE confirms that servers operate reliably within 18–24°C.

Conversely, setting temperatures above 24°C risks overheating. A manufacturing firm in Detroit set their units to 26°C to save energy, leading to frequent server throttling and a 10% increase in hardware errors. The sweet spot is 20–22°C: this balances efficiency and hardware protection. Additionally, avoid frequent manual adjustments—use the unit’s programmable settings to maintain consistent temperatures, and leverage smart features (if available) to adjust based on real-time heat load.

· Overlooking Humidity Control

Computer room cooling units don’t just cool; they also regulate humidity (optimal range: 40–60%). Yet many businesses disable humidity control or set it incorrectly, leading to costly issues. High humidity (>60%) causes condensation and corrosion of circuit boards, while low humidity (<40%) encourages static buildup, and the resulting electrostatic discharge can damage components. A law firm in Florida disabled their cooling unit’s dehumidifier to save energy; humidity climbed to 75%, corroding server motherboards and causing a 3-hour outage. A tech company in Arizona ignored humidification, leading to 30% humidity and static-induced data corruption.

The solution: keep humidity control enabled and calibrated to 40–60%. Modern computer room cooling units have dual-stage humidity control (dehumidifiers + humidifiers) to maintain this range automatically—don’t disable this feature. If your unit lacks humidity control, invest in a standalone humidifier/dehumidifier to complement it.
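The temperature and humidity targets from this section can be turned into a simple configuration check. The sketch below uses the 20–22°C and 40–60% ranges given in the article; the function `check_setpoints` and its warning messages are illustrative and not part of any vendor's interface.

```python
TEMP_RANGE_C = (20.0, 22.0)        # "sweet spot" from the text
HUMIDITY_RANGE_PCT = (40.0, 60.0)  # optimal relative humidity from the text


def check_setpoints(temp_c, humidity_pct):
    """Return a list of warnings; an empty list means both settings are in range."""
    warnings = []
    lo_t, hi_t = TEMP_RANGE_C
    if temp_c < lo_t:
        warnings.append(f"temperature {temp_c} C is below target: wasted energy")
    elif temp_c > hi_t:
        warnings.append(f"temperature {temp_c} C is above target: overheating risk")
    lo_h, hi_h = HUMIDITY_RANGE_PCT
    if humidity_pct < lo_h:
        warnings.append(f"humidity {humidity_pct}% is below target: static risk")
    elif humidity_pct > hi_h:
        warnings.append(f"humidity {humidity_pct}% is above target: corrosion risk")
    return warnings


# A 16 C setpoint combined with 75% humidity trips both checks.
for message in check_setpoints(16.0, 75.0):
    print(message)
```

Running a check like this against each unit's configured values during quarterly inspections is one way to catch settings that have drifted or been changed by hand.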

How to Fix (and Avoid) These Mistakes

The good news is that most mistakes with computer room cooling units are easily fixable:

- Conduct a heat load audit to right-size your unit; replace oversized/undersized units or add supplementary cooling if needed.

- Match unit type to your needs: air-cooled for low-to-medium density, liquid for high-density, portable for edge setups.

- Reposition units for optimal airflow, ensuring clear paths to server intakes and avoiding heat sources.

- Schedule professional maintenance: quarterly inspections, monthly filter checks, and annual servicing.

- Calibrate settings to 20–22°C and 40–60% humidity, and avoid manual adjustments.

- Invest in smart features: real-time monitoring, predictive maintenance alerts, and load-based cooling adjustments.