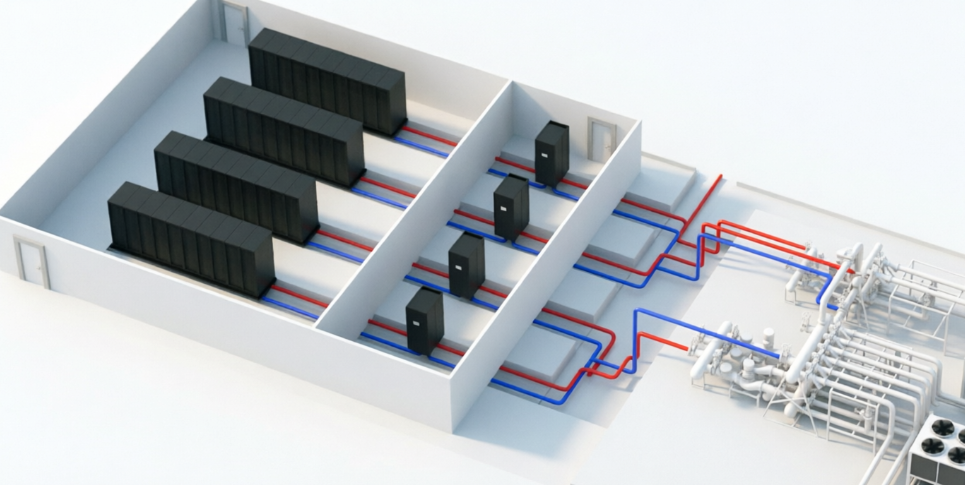

In a data center, the CDU (coolant distribution unit) is the central heat-exchange module of the liquid cooling system. It circulates coolant through cold plates that capture heat directly from CPUs and GPUs, then transfers that heat to the facility’s primary cooling loop (or, in liquid-to-air designs, to the room air).

When selecting a CDU for a data center deployment, operators must weigh a wide range of technical specifications, architectural choices, and operational requirements. This guide provides a step-by-step framework for choosing the right CDU for your environment.

Step 1: Assess Thermal Capacity and Power Density Requirements

Thermal capacity is the cornerstone of any CDU selection. Your CDU must be able to handle the total design power of the system: the amount of heat your servers and GPUs generate. As a rule of thumb, size for both the heat load of your most power-dense racks and the total load per compute pod.

Modern CDUs span a wide range of capacities. For small to medium deployments, in-rack and in-row CDUs typically handle 70 kW to 600 kW. Facility-scale units cover 2 MW to 4 MW per unit, while hyperscale-class CDUs can reach 10 MW or more per module. Carrier, for example, recently introduced CDUs rated from 1.3 MW to 5 MW for hyperscale data centers.

When sizing a CDU, consider not just current heat loads but projected growth. Rack power densities for AI workloads are rising rapidly, and replacing or retrofitting a cooling system later is far more expensive than provisioning extra capacity up front.
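As a rough illustration of sizing with headroom, the Python sketch below finds a pod's densest rack, totals the pod's rack loads, scales the total by a growth factor, adds a design margin, and counts how many units of a given capacity would be needed. The rack loads, the 1.5x growth factor, the 10% margin, and the 600 kW unit size are all hypothetical placeholders, not recommendations, and redundancy (Step 6) is deliberately left out.

```python
import math

def size_pod(rack_loads_kw, growth_factor, design_margin):
    """Return (peak rack load, required CDU capacity) for one compute pod, in kW.

    growth_factor and design_margin are hypothetical planning inputs.
    """
    peak_rack_kw = max(rack_loads_kw)                   # densest rack sets the per-rack requirement
    projected_kw = sum(rack_loads_kw) * growth_factor   # pod total scaled for expected growth
    return peak_rack_kw, projected_kw * (1 + design_margin)

def units_needed(capacity_kw, unit_capacity_kw):
    """CDUs needed to carry capacity_kw, before any redundancy is added."""
    return math.ceil(capacity_kw / unit_capacity_kw)

# Hypothetical pod: six 100 kW racks and two 132 kW racks.
pod_racks_kw = [100.0] * 6 + [132.0] * 2
peak_kw, capacity_kw = size_pod(pod_racks_kw, growth_factor=1.5, design_margin=0.1)
print(peak_kw, round(capacity_kw, 1), units_needed(capacity_kw, 600.0))  # prints: 132.0 1425.6 3
```

The two numbers answer different questions: the peak rack load constrains in-rack units, while the pod total drives in-row or facility-scale unit counts.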

Step 2: Calculate Flow Rate and Pressure Head

Flow rate is directly tied to cooling performance. Modern AI and HPC clusters demand higher flow rates than ever—typically 1.5 to 2 liters per minute per kilowatt of heat. Underestimating flow requirements leads to insufficient heat removal and thermal throttling, directly impacting GPU performance and training times.
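The 1.5 to 2 L/min per kW figure follows from the sensible-heat balance Q = ρ · V̇ · c_p · ΔT. The sketch below assumes plain water with approximate properties (density 1.0 kg/L, specific heat 4.18 kJ/kg·K) and converts between flow and coolant temperature rise. It shows that those flow ratios correspond to a supply-to-return rise of roughly 7 to 10 K. Glycol mixtures have a lower specific heat, so they need more flow for the same rise; substitute your coolant vendor's property data.

```python
WATER_DENSITY_KG_L = 1.0   # approximate, near room temperature
WATER_CP_KJ_KG_K = 4.18    # approximate specific heat of water

def flow_lpm(heat_kw, delta_t_k, density=WATER_DENSITY_KG_L, cp=WATER_CP_KJ_KG_K):
    """Flow (L/min) needed to carry heat_kw at a given supply-to-return temperature rise."""
    litres_per_s = heat_kw / (density * cp * delta_t_k)  # Q = rho * V * cp * dT, solved for V
    return litres_per_s * 60.0

def rise_for_flow_ratio_k(lpm_per_kw, density=WATER_DENSITY_KG_L, cp=WATER_CP_KJ_KG_K):
    """Coolant temperature rise (K) implied by a flow ratio in L/min per kW."""
    return 60.0 / (density * cp * lpm_per_kw)

print(round(rise_for_flow_ratio_k(1.5), 1), round(rise_for_flow_ratio_k(2.0), 1))  # 9.6 7.2
print(round(flow_lpm(100.0, 10.0), 1))                                              # 143.5
```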

The CDU’s pumps must generate enough pressure head to push coolant through every loop, bend, and cold plate in your system. System designers often underestimate pressure drop, especially in retrofits where the existing piping was not designed for liquid cooling.
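Pump head is set by the worst-case (index) circuit. A minimal sketch, assuming every component's pressure drop scales with the square of flow (a common approximation for turbulent flow), sums the drops along that circuit, adds a safety margin, and converts pascals to meters of head with h = Δp / (ρ · g). The component list, flows, and 20% margin are hypothetical; use vendor pressure-drop curves for real equipment.

```python
G = 9.81  # gravitational acceleration, m/s^2

def scaled_drop_kpa(rated_drop_kpa, rated_flow_lpm, design_flow_lpm):
    """Scale a rated pressure drop to the design flow, assuming dP grows with flow squared."""
    return rated_drop_kpa * (design_flow_lpm / rated_flow_lpm) ** 2

def required_head_m(index_circuit, margin, density_kg_m3=1000.0):
    """Pump head (m) to overcome the series drops on the index circuit plus a margin.

    index_circuit: (rated_drop_kpa, rated_flow_lpm, design_flow_lpm) per component.
    """
    total_kpa = sum(scaled_drop_kpa(dp, q_rated, q_design)
                    for dp, q_rated, q_design in index_circuit)
    total_pa = total_kpa * 1000.0 * (1.0 + margin)
    return total_pa / (density_kg_m3 * G)  # h = dP / (rho * g)

# Hypothetical index circuit; replace with vendor curves for real equipment.
index_circuit = [
    (40.0, 600.0, 600.0),  # CDU heat exchanger and internal piping
    (25.0, 800.0, 400.0),  # header segment on the index run
    (20.0, 100.0, 150.0),  # rack manifold, running above its rated flow
    (50.0, 2.0, 2.0),      # server cold-plate loop
]
print(round(required_head_m(index_circuit, margin=0.2), 1))  # about 17.3 m
```

The rack manifold line illustrates the retrofit trap: running 50% above its rated flow more than doubles its pressure drop.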

Step 3: Choose Between Liquid-to-Liquid and Liquid-to-Air CDUs

CDUs generally fall into two primary architectures:

Liquid-to-Liquid (L2L) CDUs use a heat exchanger to transfer heat from the IT coolant loop to the facility water system, often chilled water. They are best suited to large-scale or HPC data centers with existing chilled water infrastructure. L2L CDUs offer superior cooling efficiency but require a facility water supply and proper water treatment protocols.
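For an L2L unit, a key check is whether the facility water is cold enough. CDU datasheets commonly quote an approach temperature: the difference between the IT-loop (secondary) supply temperature and the facility water supply temperature. The sketch below, with hypothetical temperatures, approach values, and a hypothetical 40 °C cold-plate inlet limit, tests whether the resulting secondary supply stays within that limit.

```python
def tcs_supply_temp_c(facility_supply_c, approach_k):
    """Coldest IT-loop (secondary) supply temperature the L2L exchanger can deliver."""
    return facility_supply_c + approach_k

def l2l_feasible(facility_supply_c, approach_k, max_inlet_c):
    """True if the IT-loop supply can stay at or below the cold-plate inlet limit."""
    return tcs_supply_temp_c(facility_supply_c, approach_k) <= max_inlet_c

# Hypothetical values with a 40 C cold-plate inlet limit.
print(tcs_supply_temp_c(32.0, 3.0))    # 35.0
print(l2l_feasible(32.0, 3.0, 40.0))   # True
print(l2l_feasible(38.0, 4.0, 40.0))   # False: 42 C exceeds the limit
```

The first case shows that facility water well above traditional chilled-water temperatures can still be sufficient when the exchanger's approach is small.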

Liquid-to-Air (L2A) CDUs reject heat directly into the data center’s air via integrated fans and coils. They are suitable for smaller deployments or facilities without chilled water access, but the heat they reject adds to the data hall’s load and raises the room’s ambient temperature, so the existing air cooling must have the capacity to absorb it.
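Because an L2A CDU releases its heat into the room, two quick checks are useful: how much air must pass through its coils, from Q = ρ_air · V̇ · c_p · ΔT_air, and whether the room's existing air cooling can absorb the extra load. The air properties below are approximate sea-level values, the load figures are hypothetical, and fan power (which also ends up as heat in the room) is ignored for simplicity.

```python
AIR_DENSITY_KG_M3 = 1.2   # approximate, near 20 C at sea level
AIR_CP_KJ_KG_K = 1.005    # approximate specific heat of air

def coil_airflow_m3s(heat_kw, air_delta_t_k):
    """Airflow (m^3/s) through the L2A coils to reject heat_kw at the given air temperature rise."""
    return heat_kw / (AIR_DENSITY_KG_M3 * AIR_CP_KJ_KG_K * air_delta_t_k)

def room_has_headroom(room_cooling_kw, existing_air_load_kw, l2a_load_kw):
    """The L2A CDU's heat lands on the room's air cooling, so the total must fit."""
    return existing_air_load_kw + l2a_load_kw <= room_cooling_kw

# Hypothetical: a 70 kW rack load rejected with a 15 K air temperature rise.
print(round(coil_airflow_m3s(70.0, 15.0), 2))   # roughly 3.87 m^3/s
print(room_has_headroom(300.0, 250.0, 70.0))    # False: 320 kW exceeds 300 kW
```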

Step 4: Evaluate CDU Types by Deployment Location

Beyond the heat exchange method, CDUs are classified by where they sit within the facility:

Rack CDUs mount directly within individual server racks, providing dedicated cooling for that enclosure. They are ideal for high-density racks or for retrofits where row-level integration is impractical. Vertiv’s CoolChip CDU 70 and CDU 100, both purpose-built for AI infrastructure, are examples of this category.

In-Row CDUs sit between racks within a hot/cold aisle configuration, serving multiple racks from a single unit. This is the most common deployment pattern for enterprise AI clusters. For most new projects, an in-row CDU offers the best balance of density and serviceability.
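How many racks a single in-row CDU can serve depends on both its thermal rating and its rated flow, and either can be the binding limit. This sketch, using hypothetical ratings and the 1.5 L/min per kW figure from Step 2, takes the lower of the two; in the example the unit runs out of flow before it runs out of kilowatts.

```python
import math

def racks_per_cdu(cdu_capacity_kw, cdu_max_flow_lpm, rack_load_kw, lpm_per_kw):
    """Identical racks one CDU can serve: the lower of the thermal and flow limits."""
    by_heat = math.floor(cdu_capacity_kw / rack_load_kw)
    by_flow = math.floor(cdu_max_flow_lpm / (rack_load_kw * lpm_per_kw))
    return min(by_heat, by_flow)

# Hypothetical in-row unit: 600 kW and 800 L/min, serving 100 kW racks at 1.5 L/min per kW.
print(racks_per_cdu(600.0, 800.0, 100.0, 1.5))  # prints 5 (thermal limit 6, flow limit 5)
```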

Step 5: Understand Single Phase vs. Two Phase Technology

Single-phase direct-to-chip cooling is currently the dominant choice for AI data centers. In this architecture, coolant absorbs heat from processors and returns to the CDU as a liquid, where it is cooled and recirculated. The technology is mature, well-understood, and supported by a broad ecosystem of vendors.

Two-phase direct-to-chip cooling is an emerging alternative. The coolant changes from liquid to vapor as it absorbs heat, then condenses back to liquid at the CDU. Evaporating a kilogram of fluid absorbs its latent heat of vaporization, which is far more energy than a single-phase liquid picks up over a temperature rise of a few degrees, so the same heat can be carried with substantially lower flow rates and less pump energy. However, two-phase systems bring higher costs and regulatory considerations, particularly around refrigerants and their global warming potential.
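To see why phase change reduces flow, compare the mass flow needed for the same heat load. Single-phase cooling carries heat as sensible heat (m = Q / (c_p · ΔT)); two-phase cooling carries it as latent heat (m = Q / (x · h_fg), where x is the vapor fraction leaving the cold plate). The latent heat of 180 kJ/kg and exit quality of 0.5 below are placeholders of the same order as common refrigerants, not data for any specific fluid.

```python
WATER_CP_KJ_KG_K = 4.18  # approximate specific heat of water

def single_phase_mass_flow(heat_kw, delta_t_k, cp=WATER_CP_KJ_KG_K):
    """kg/s needed when heat is absorbed as sensible heat: m = Q / (cp * dT)."""
    return heat_kw / (cp * delta_t_k)

def two_phase_mass_flow(heat_kw, latent_heat_kj_kg, exit_quality):
    """kg/s needed when heat is absorbed by partial evaporation: m = Q / (x * h_fg).

    Assumes the fluid enters the cold plate as saturated liquid.
    """
    return heat_kw / (exit_quality * latent_heat_kj_kg)

water = single_phase_mass_flow(100.0, 10.0)              # 100 kW at a 10 K rise
refrigerant = two_phase_mass_flow(100.0, 180.0, 0.5)     # placeholder h_fg and exit quality
print(round(water, 2), round(refrigerant, 2), round(water / refrigerant, 2))
```

With these placeholders the two-phase loop needs roughly half the mass flow; a higher exit quality or a fluid with a larger latent heat widens the gap.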

For most enterprise deployments today, single-phase L2L CDUs remain the safest, most cost-effective choice. Two-phase technology is best suited to the highest-density AI and HPC environments, where every watt of cooling efficiency matters and capital budgets can accommodate specialized infrastructure.

Step 6: Specify Redundancy and Reliability Requirements

N+1 redundancy—adding one extra CDU beyond what is required to meet the full thermal load—has become the industry-standard minimum for cooling system design. This approach allows continued operation during component failure, planned maintenance, or shifting loads. For mission-critical AI workloads, operators increasingly specify 2N CDU configurations, though this comes with significant space and capital costs.
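The difference between N, N+1, and 2N comes down to simple arithmetic on unit counts. The sketch below treats 2N as two complete sets of N units, which is how the term is generally used; the load and unit capacity in the example are hypothetical.

```python
import math

def cdu_count(total_load_kw, unit_capacity_kw, scheme="N+1"):
    """Number of CDUs to install under a given redundancy scheme."""
    n = math.ceil(total_load_kw / unit_capacity_kw)  # units needed to carry the full load
    if scheme == "N":
        return n
    if scheme == "N+1":
        return n + 1  # one spare unit beyond the full-load requirement
    if scheme == "2N":
        return 2 * n  # two complete, independent sets of N units
    raise ValueError(f"unknown redundancy scheme: {scheme}")

# Hypothetical 2 MW load served by 600 kW units.
for scheme in ("N", "N+1", "2N"):
    print(scheme, cdu_count(2000.0, 600.0, scheme))
```

Going from N+1 to 2N here adds three more units, which is where the space and capital cost of 2N comes from.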

Beyond unit-level redundancy, look for CDUs with redundant pumps (N+1 or 2N configurations), redundant strainers and sensors, and dual power feeds. Open-frame designs that keep pumps, filters, and controls easily accessible reduce downtime during maintenance.
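Redundant pumps raise the probability that enough pumps are running at any moment. Assuming independent pump failures and an identical, hypothetical per-pump availability, the probability that at least k of n pumps are up is the binomial sum below. Real failures are often correlated (shared power, contaminated coolant), so treat the result as an optimistic upper bound.

```python
from math import comb

def k_of_n_availability(k, n, unit_availability):
    """Probability that at least k of n independent, identical pumps are running."""
    a = unit_availability
    return sum(comb(n, i) * a**i * (1 - a) ** (n - i) for i in range(k, n + 1))

# Hypothetical per-pump availability of 99%.
print(k_of_n_availability(1, 1, 0.99))  # single duty pump, no spare
print(k_of_n_availability(1, 2, 0.99))  # 1+1: one duty pump plus one spare
print(k_of_n_availability(2, 3, 0.99))  # 2+1: two duty pumps plus one spare
```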

Step 7: Plan for Sustainability and Long-Term Scalability

When evaluating CDUs, consider support for higher leaving water temperatures. Systems that can operate with coolant temperatures up to 40°C increase free cooling hours, reducing chiller energy consumption and enabling heat reuse for district heating or other applications. Heat recovery capability is rapidly moving from a nice-to-have feature to a must-have requirement, particularly in regions with carbon pricing or aggressive sustainability targets.
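The link between higher coolant temperatures and free cooling can be made concrete by counting the hours in which dry coolers alone can meet the IT-loop supply setpoint. This sketch assumes a fixed heat exchanger approach and a fixed dry-cooler approach (both hypothetical) and uses a handful of made-up hourly ambient temperatures; a real study would use a full year of site weather data.

```python
def free_cooling_hours(hourly_ambient_c, tcs_supply_c, hx_approach_k, dry_cooler_approach_k):
    """Count hours in which dry coolers alone can hold the IT-loop supply setpoint.

    Facility water leaves the dry cooler at about ambient + dry_cooler_approach_k,
    and the CDU's heat exchanger adds hx_approach_k on top of that for the IT loop.
    """
    max_ambient_c = tcs_supply_c - hx_approach_k - dry_cooler_approach_k
    return sum(1 for t in hourly_ambient_c if t <= max_ambient_c)

# Made-up hourly ambient dry-bulb temperatures, for illustration only.
sample_ambient_c = [8.0, 15.0, 21.0, 27.0, 33.0]
for supply_c in (30.0, 40.0):
    print(supply_c, free_cooling_hours(sample_ambient_c, supply_c, 3.0, 8.0))
```

Raising the supply setpoint from 30 °C to 40 °C raises the ambient cutoff from 19 °C to 29 °C, doubling the free-cooling hours in this toy sample.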

Scalability requires similar forethought. The CDU market has surged: direct liquid cooling is growing at approximately 20% to 30% CAGR, and that market is projected to reach nearly $6 billion by 2029. This rapid growth has attracted around 40 vendors to the CDU space, ranging from global powerhouses to niche specialists. While competition drives innovation, a crowded field also raises the risk of vendor lock-in, or of stranded assets if a supplier exits the market.

Specify CDUs with modular expansion paths, open control architectures, and compatibility with multiple coolant types. Provisioning spare capacity up front lets you scale rack densities without replacing the entire cooling infrastructure. Ask vendors for reference deployments at a scale similar to your planned facility, and verify long-term parts availability and service support in your region.
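A modular expansion path can be planned by projecting load year by year and counting the modules needed at each point. The sketch below assumes compound annual load growth, a fixed module size, and one spare module for N+1; the 1 MW starting load, 25% annual growth, and 500 kW module size are hypothetical.

```python
import math

def expansion_plan(initial_kw, annual_growth, years, module_kw, spare_modules=1):
    """Return (year, projected load kW, modules installed) with N + spare_modules each year."""
    plan = []
    for year in range(years + 1):
        load_kw = initial_kw * (1 + annual_growth) ** year  # compound growth
        n = math.ceil(load_kw / module_kw)                  # modules to carry the load
        plan.append((year, round(load_kw, 1), n + spare_modules))
    return plan

for year, load_kw, modules in expansion_plan(1000.0, 0.25, 4, 500.0):
    print(year, load_kw, modules)
```

Knowing that module count climbs from 3 to 6 over the horizon is what tells you how much floor space, piping, and power to reserve on day one.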

Final Considerations: AI, HPC, and the Future of CDUs

AI training workloads surge and cycle unpredictably. GPU power draw can rise or fall rapidly, causing immediate coolant temperature spikes. CDUs for AI environments must continuously adjust pump speeds, flow rates, and valve positions to track these swings and distribute the thermal load evenly. This requires advanced control logic, not just oversized pumps.
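As a minimal illustration of closed-loop control, the sketch below uses a PI (proportional-integral) controller that modulates a facility-water valve to hold the IT-loop supply temperature through a sudden load step. The plant model, gains, and temperatures are hypothetical toy values chosen only to show the behavior; production CDUs use vendor-tuned control logic, and a common arrangement also runs a separate loop that sets pump speed from a differential-pressure or flow setpoint.

```python
class PIController:
    """Discrete PI controller with output limits and a clamped integrator (anti-windup)."""

    def __init__(self, kp, ki, setpoint, out_min=0.0, out_max=1.0, initial_output=0.0):
        self.kp = kp
        self.ki = ki
        self.setpoint = setpoint
        self.out_min = out_min
        self.out_max = out_max
        self.integral = initial_output  # start from the current valve position

    def update(self, measurement, dt):
        error = measurement - self.setpoint  # positive when the coolant is too warm
        self.integral += self.ki * error * dt
        # Clamp the integrator so it cannot wind up past the valve limits.
        self.integral = min(max(self.integral, self.out_min), self.out_max)
        output = self.kp * error + self.integral
        return min(max(output, self.out_min), self.out_max)


def simulate(steps=600, dt=1.0):
    """Toy first-order plant (hypothetical); returns the final (supply temp, valve)."""
    facility_c, setpoint_c = 25.0, 30.0
    ctrl = PIController(kp=0.05, ki=0.01, setpoint=setpoint_c, initial_output=0.4)
    temp_c, load_kw, valve = setpoint_c, 100.0, 0.4
    for step in range(steps):
        if step == 50:
            load_kw = 200.0  # sudden GPU load step
        valve = ctrl.update(temp_c, dt)
        # Equilibrium supply temperature rises with load and falls as the valve opens.
        equilibrium_c = facility_c + 0.02 * load_kw / max(valve, 0.05)
        temp_c += 0.1 * (equilibrium_c - temp_c)  # first-order thermal lag
    return temp_c, valve


final_temp_c, final_valve = simulate()
print(round(final_temp_c, 2), round(final_valve, 2))
```

In this toy model the valve settles near 0.8, the opening at which the plant's equilibrium equals the 30 °C setpoint at 200 kW; the integral term is what removes the steady-state offset that a proportional-only controller would leave.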

HPC clusters are often the highest-density deployments in a facility. For these environments, consider dedicated CDUs per compute pod rather than units shared across multiple pods. This approach contains thermal failure domains, simplifies troubleshooting, and aligns cooling capacity with specific workload characteristics.

Remember: a CDU is not just a pump with a heat exchanger. It is the control layer that turns coolant from a passive medium into a monitored, actively managed resource. Choosing the right CDU for your data center is one of the most consequential infrastructure decisions you will make in the AI era.